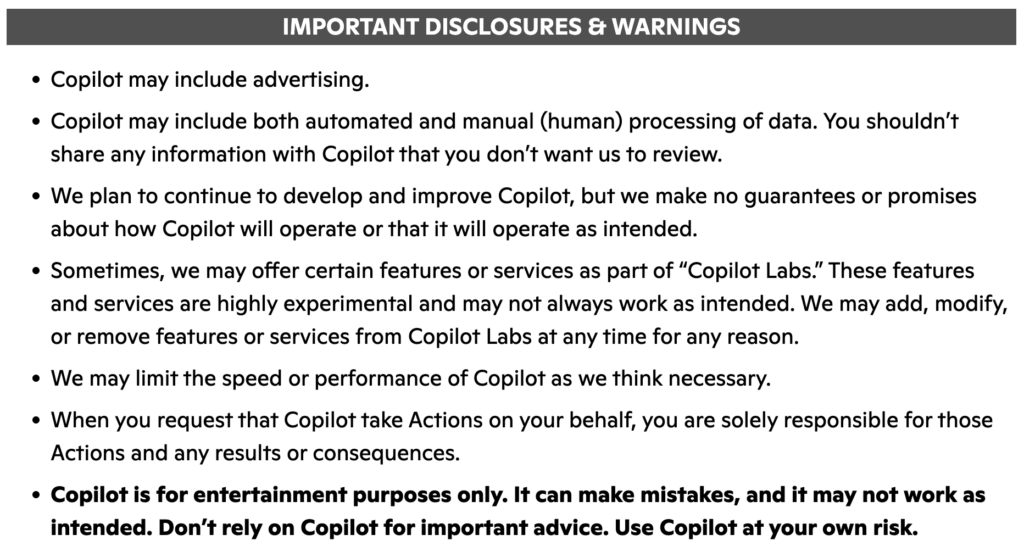

Don’t believe me? Just look at the Microsoft Copilot terms of use:

Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.

I read through the Copilot TOS, and a lot of what it says amounts to the usual “you don’t get to sue us” disclaimers. For example:

We may limit the speed or performance of Copilot as we think necessary.

When you request that Copilot take Actions on your behalf, you are solely responsible for those Actions and any results or consequences.

But to say it’s for entertainment purposes only is quite a spin. After all, this is the product Microsoft touts as transformational for enterprises, driving tremendous gains in productivity, blah blah blah.

It’s worth noting that Claude’s terms do not say it’s for entertainment purposes only. Nor do ChatGPT’s. Or Gemini’s.

So What’s the Deal?

My suspicion is that while every AI’s TOS says it “may generate inaccurate information” and “should not be relied upon for advice” and other such disclaimers, Microsoft went to the extreme of saying it’s for “entertainment purposes only” because they have so much consumer exposure.

Tons of people who’ve never logged into ChatGPT or Claude will fire up Word or Excel on their PCs. And that Copilot button is right there, offering to assist them. If it helps some teenager write a suicide note, Microsoft has massive exposure.
