GitHub has announced that going forward, your data on GitHub will be used to train Microsoft’s Copilot LLM.

From April 24 onward, interaction data—specifically inputs, outputs, code snippets, and associated context—from Copilot Free, Pro, and Pro+ users will be used to train and improve our AI models unless they opt out. Copilot Business and Copilot Enterprise users are not affected by this update.

I wonder if they used Copilot to write this announcement? I mean, those em-dashes…

Anyway, according to GitHub, everybody else is doing it, so they will, too.

This approach aligns with established industry practices and will improve model performance for all users.

Yeah.

By participating, you’ll help our models better understand development workflows, deliver more accurate and secure code pattern suggestions, and improve their ability to help you catch potential bugs before they reach production.

Uh huh.

What a Messy Announcement

So at first read, it sounds like if you don’t use Copilot (and really, who does?) your data won’t be absorbed.

But then it goes on to talk about repos’ “file names, repository structure, and navigation patterns”. It also notes that “the data used in this program may be shared with GitHub affiliates”. Ugh!

According to the announcement, not everything is included. Specifically, this is what's not included:

Content from your issues, discussions, or private repositories at rest. We use the phrase “at rest” deliberately because Copilot does process code from private repositories when you are actively using Copilot. This interaction data is required to run the service and could be used for model training unless you opt out.

Obviously, public repos’ data is used because, well, it’s public.

How to Opt Out

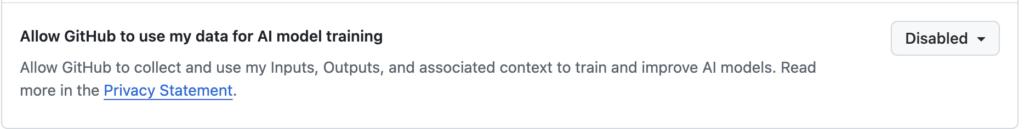

Head over to your GitHub settings and look for this, under “Privacy”:

Should You Opt Out?

I guess this is a personal choice. Personally, the announcement itself made me want to opt out. It went from “Copilot users” to “metadata in your repo” to “we may share this with third parties” in only a few paragraphs.

I expect that if I used Copilot – and to be honest, I always forget that product still exists when people talk about AI – my inputs would be fed back into the model. But I expect my private repo to be, well, private, not scanned to learn about “file names, repository structure, and navigation patterns”.

So I opted out. Whether that is truly honored…

In the meantime, maybe GitHub could take action on the nulled WHMCS repo I reported more than two years ago.
